Word Embedding

Word embedding maps words to vectors of real numbers, enabling a machine learning model to process them. Word2Vec is one of the most widely used neural-network-based methods for learning word embeddings.

Word2Vec

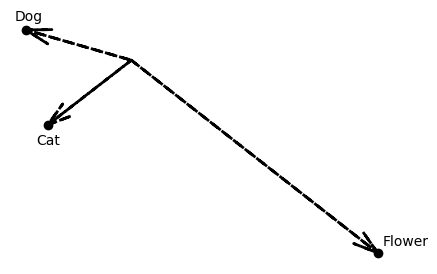

Word2Vec uses contextual information to capture the similarity between words. It rests on the assumption that words appearing in similar contexts are themselves similar. Consequently, similar words are represented by vectors with a high cosine similarity.
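Cosine similarity measures the angle between two vectors, $\cos(u, v) = \frac{u \cdot v}{\lVert u \rVert \, \lVert v \rVert}$, and ranges from $-1$ to $1$. Below is a minimal plain-Python sketch; the three-dimensional vectors are made-up toy values, not trained Word2Vec embeddings.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Return the cosine of the angle between vectors u and v."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical toy vectors (not real Word2Vec output).
book = [0.9, 0.1, 0.3]
novel = [0.8, 0.2, 0.4]
river = [-0.2, 0.9, 0.1]

print(round(cosine_similarity(book, novel), 3))  # close to 1: similar direction
print(round(cosine_similarity(book, river), 3))  # near 0: unrelated direction
```

Only the direction of the vectors matters, not their length, which is why cosine similarity rather than Euclidean distance is the usual comparison for embeddings.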

Word2Vec comprises two model architectures: CBOW and Skip-Gram.

CBOW

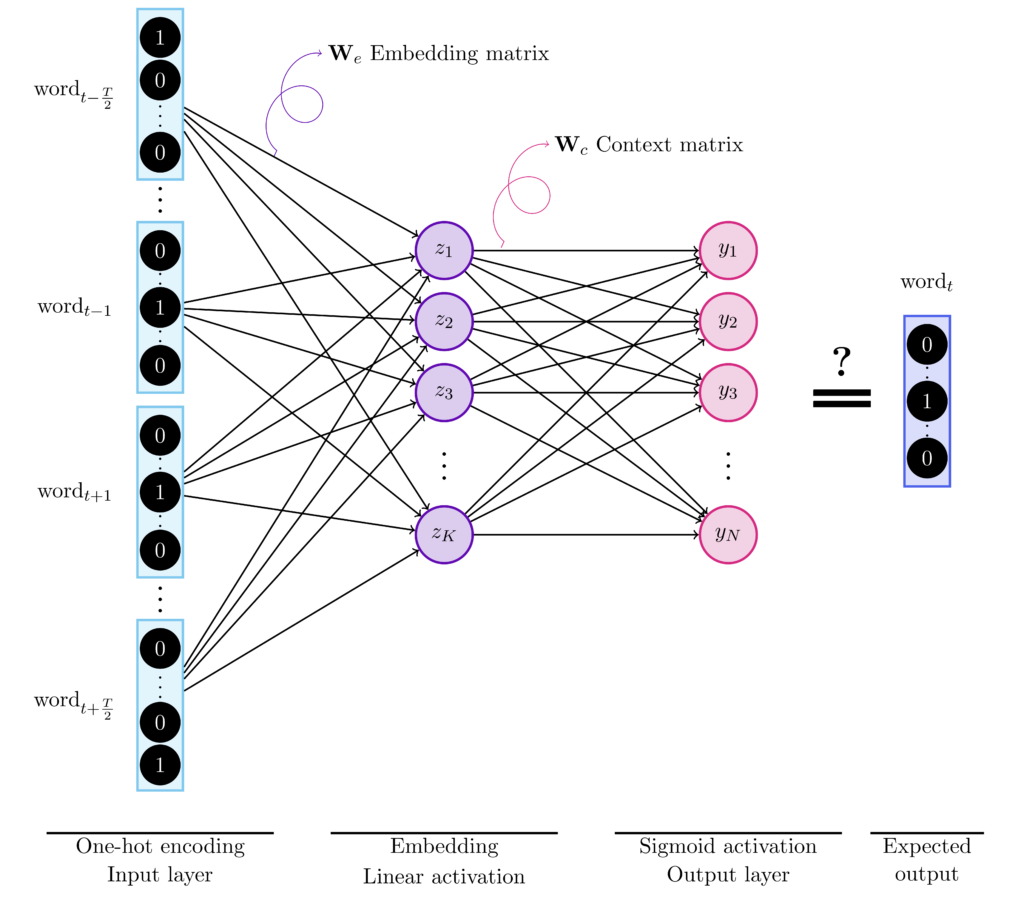

CBOW (continuous bag of words) uses a sliding window covering $T$ context words and tries to infer the target word in the middle of the window from those neighboring words.

As a corpus, let us use a paragraph from Alice’s Adventures in Wonderland by Lewis Carroll:

“Alice was beginning to get very tired of sitting by her sister on the bank, and of having nothing to do: once or twice she had peeped into the book her sister was reading, but it had no pictures or conversations in it, ‘and what is the use of a book,’ thought Alice ‘without pictures or conversation?’”

The words of this corpus are one-hot encoded using a 1-of-$N$ scheme, where $N$ is the size of the vocabulary, i.e., the number of unique words. Each word becomes an $N$-dimensional vector with a single 1 at that word’s index. For instance, “Alice” → $[1, 0, \dots, 0]$ and “was” → $[0, 1, 0, \dots, 0]$.
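A minimal sketch of the 1-of-$N$ encoding in plain Python. The tokenizer (lowercase, keep only letters) and the rule of assigning indices in order of first appearance are simplifying assumptions; real pipelines often order or prune the vocabulary by frequency.

```python
import re


def build_vocab(text: str) -> dict[str, int]:
    """Map each unique (lowercased) word to an index, in order of first appearance."""
    vocab: dict[str, int] = {}
    for word in re.findall(r"[a-z]+", text.lower()):
        if word not in vocab:
            vocab[word] = len(vocab)
    return vocab


def one_hot(word: str, vocab: dict[str, int]) -> list[int]:
    """Return the 1-of-N vector for `word`: N entries, a single 1 at its index."""
    vec = [0] * len(vocab)
    vec[vocab[word]] = 1
    return vec


corpus = "Alice was beginning to get very tired of sitting by her sister on the bank"
vocab = build_vocab(corpus)
print(len(vocab))                    # 15, the vocabulary size N
print(one_hot("alice", vocab)[:4])   # [1, 0, 0, 0]
print(one_hot("was", vocab)[:4])     # [0, 1, 0, 0]
```

Because these vectors are $N$-dimensional and almost entirely zero, they are never multiplied out in practice; a model simply looks up the row at the word’s index.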

Then, with a window of length $T = 4$ on the first sentence, the words “Alice”, “was”, “to”, and “get” are the inputs to the CBOW architecture, while the target is “beginning”.
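The windowing step can be sketched as follows, assuming $T$ is even and split evenly ($T/2$ words on each side of the target). Positions too close to the sentence boundary to have a full window are simply skipped here, which is a simplification; the function name `cbow_pairs` is illustrative.

```python
def cbow_pairs(tokens: list[str], T: int = 4) -> list[tuple[list[str], str]]:
    """Return (context, target) pairs with T // 2 context words on each side.

    Assumes T is even; positions without a full window are skipped.
    """
    half = T // 2
    pairs = []
    for i in range(half, len(tokens) - half):
        context = tokens[i - half:i] + tokens[i + 1:i + 1 + half]
        pairs.append((context, tokens[i]))
    return pairs


tokens = "Alice was beginning to get very tired".split()
for context, target in cbow_pairs(tokens):
    print(context, "->", target)
# ['Alice', 'was', 'to', 'get'] -> beginning
# ['was', 'beginning', 'get', 'very'] -> to
# ['beginning', 'to', 'very', 'tired'] -> get
```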

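The hidden layer has $K$ units, where $K$ is the size of the embeddings, usually set to 100 or more. The input-to-hidden weights form an $N \times K$ matrix $W$: multiplying a one-hot vector by $W$ simply selects that word’s row, and in CBOW the hidden layer averages the rows of the context words. After training, each word’s $K$-dimensional row of $W$ is used as its vector representation.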
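To make the shapes concrete, here is a minimal CBOW forward pass in plain Python, assuming an $N \times K$ input matrix `W`, a $K \times N$ output matrix `W_out`, and a full softmax output layer. The random weights, toy sizes, and vocabulary indices are placeholders, and training (backpropagation) is omitted.

```python
import math
import random


def cbow_forward(context_ids: list[int], W: list[list[float]],
                 W_out: list[list[float]]) -> list[float]:
    """One CBOW forward pass; returns a softmax distribution over the N words.

    W     : N x K input weights (row i is the embedding of word i).
    W_out : K x N output weights.
    """
    K = len(W[0])
    # A one-hot vector times W selects one row, so the hidden layer is
    # simply the average of the context words' rows.
    h = [sum(W[i][k] for i in context_ids) / len(context_ids) for k in range(K)]
    # Score every vocabulary word, then normalize with a softmax.
    N = len(W_out[0])
    scores = [sum(h[k] * W_out[k][j] for k in range(K)) for j in range(N)]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


random.seed(0)
N, K = 15, 8  # toy sizes for illustration
W = [[random.uniform(-0.5, 0.5) for _ in range(K)] for _ in range(N)]
W_out = [[random.uniform(-0.5, 0.5) for _ in range(N)] for _ in range(K)]

# Hypothetical vocabulary indices of "Alice", "was", "to", "get".
probs = cbow_forward([0, 1, 3, 4], W, W_out)
print(len(probs), round(sum(probs), 6))
```

Training would adjust `W` and `W_out` to raise the probability of the true target word, for example by minimizing its negative log-probability. Because the softmax scores all $N$ words, the output layer costs $O(NK)$ per prediction.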

Skip-Gram

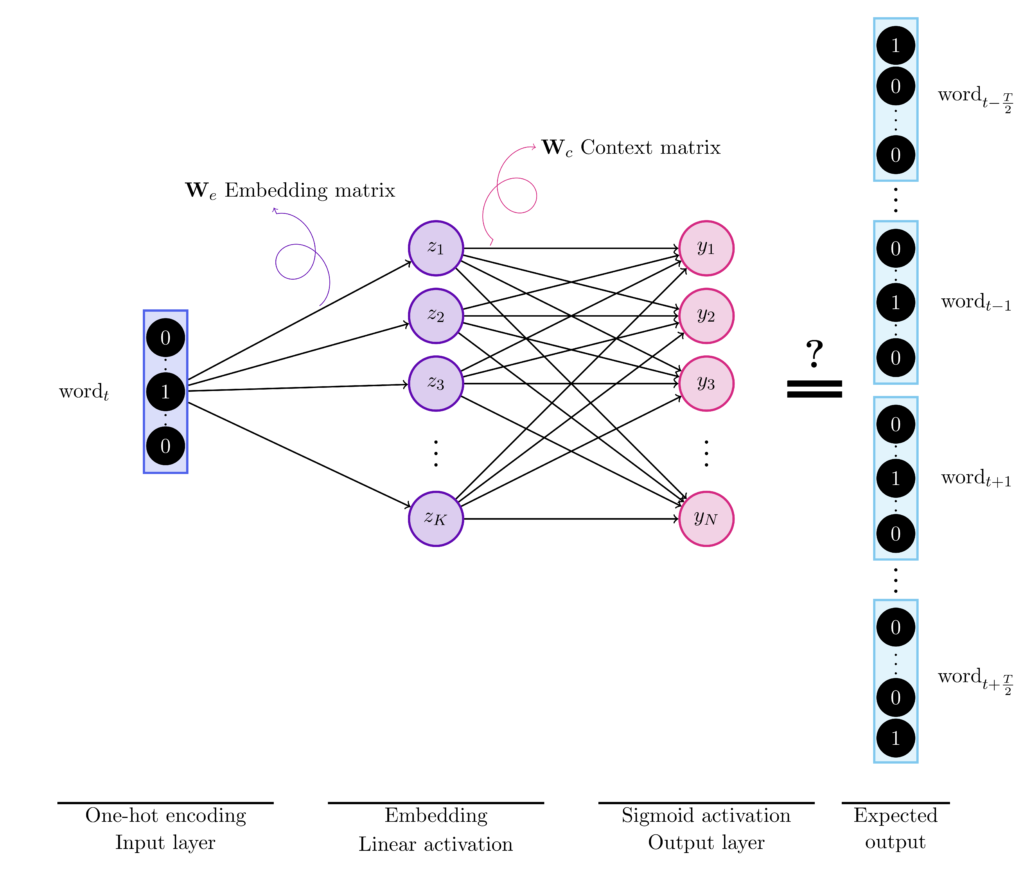

As opposed to CBOW, Skip-Gram infers the neighboring words given a single word.

If we consider the same example used for CBOW, for a window of length $T = 4$ on the first sentence, the word “beginning” is the input to the Skip-Gram architecture while the targets are “Alice”, “was”, “to”, and “get”.
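The same window yields Skip-Gram training examples by reversing the roles: each (input, context) pair becomes its own sample. A minimal sketch, assuming an even $T$ with $T/2$ words on each side and skipping positions without a full window; `skipgram_pairs` is an illustrative name.

```python
def skipgram_pairs(tokens: list[str], T: int = 4) -> list[tuple[str, str]]:
    """Return (input, context) pairs with T // 2 context words on each side."""
    half = T // 2
    pairs = []
    for i in range(half, len(tokens) - half):
        for j in range(i - half, i + half + 1):
            if j != i:  # the input word is not its own context
                pairs.append((tokens[i], tokens[j]))
    return pairs


tokens = "Alice was beginning to get very tired".split()
for inp, ctx in skipgram_pairs(tokens)[:4]:
    print(inp, "->", ctx)
# beginning -> Alice
# beginning -> was
# beginning -> to
# beginning -> get
```

Each full-window position produces $T$ examples here instead of one, so Skip-Gram performs roughly $T$ times as many output-layer computations as CBOW on the same text, which is one reason it is slower to train.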

Negative sampling

Training Skip-Gram is more computationally expensive than training Continuous Bag of Words (CBOW). However, Skip-Gram learns better representations of rare words, i.e., words that occur infrequently in the corpus. To speed up Skip-Gram training, Mikolov et al. introduced a technique called negative sampling.

With a full softmax output layer, every Skip-Gram prediction requires computing a score for each of the $N$ words in the vocabulary. Negative sampling reduces this to only $M+1$ scores. For every training sample, in addition to the target word, $M$ “negative” words are drawn at random from the vocabulary, and the model learns to distinguish the target word from these sampled words with a binary (logistic) classifier instead of a softmax over all $N$ words. Mikolov et al. recommend drawing the negative words from the unigram distribution raised to the power of $\frac{3}{4}$ and renormalized, which samples rare words more often than their raw frequency alone would.
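For one (input, target) pair, Mikolov et al. maximize $\log \sigma({v'_{w_O}}^{\top} v_{w_I}) + \sum_{i=1}^{M} \log \sigma(-{v'_{w_i}}^{\top} v_{w_I})$, with the $M$ negative words $w_i$ drawn from the noise distribution $P_n(w) \propto U(w)^{3/4}$; here $v$ are input (embedding) vectors, $v'$ output vectors, and $\sigma$ the logistic sigmoid. The plain-Python sketch below builds $P_n$ from raw counts, draws $M$ negatives, and evaluates the negated objective as a loss. The toy corpus, the vectors, and the choice to redraw a sample equal to the target are illustrative assumptions, not details of the original implementation.

```python
import math
import random
from collections import Counter


def noise_distribution(tokens: list[str], power: float = 0.75) -> dict[str, float]:
    """Unigram counts raised to `power` and renormalized to sum to 1."""
    counts = Counter(tokens)
    weights = {w: c ** power for w, c in counts.items()}
    total = sum(weights.values())
    return {w: x / total for w, x in weights.items()}


def sample_negatives(dist: dict[str, float], target: str, M: int,
                     rng: random.Random) -> list[str]:
    """Draw M noise words from `dist`, redrawing whenever the target comes up."""
    words, probs = zip(*dist.items())
    negatives: list[str] = []
    while len(negatives) < M:
        w = rng.choices(words, weights=probs)[0]
        if w != target:
            negatives.append(w)
    return negatives


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def neg_sampling_loss(v_in: list[float], v_target: list[float],
                      v_negs: list[list[float]]) -> float:
    """Negated objective for one (input, target) pair and its M negatives."""
    loss = -math.log(sigmoid(dot(v_target, v_in)))  # pull the true pair together
    for v_neg in v_negs:
        loss -= math.log(sigmoid(-dot(v_neg, v_in)))  # push each noise word away
    return loss


tokens = ("the book her sister was reading had no pictures "
          "or conversations in it the book").split()
dist = noise_distribution(tokens)
rng = random.Random(0)
negatives = sample_negatives(dist, target="book", M=5, rng=rng)
print(negatives)

# Hypothetical 3-dimensional vectors: v_in for the input word, v' for outputs.
v_in = [0.2, -0.1, 0.4]
v_target = [0.3, 0.0, 0.5]
v_negs = [[-0.1, 0.2, 0.0] for _ in negatives]
print(round(neg_sampling_loss(v_in, v_target, v_negs), 4))
```

In this corpus “the” occurs twice and “pictures” once, but after raising counts to $\frac{3}{4}$ their sampling ratio is $2^{3/4} \approx 1.68$ rather than 2, which is how the exponent shifts probability mass toward rarer words.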

Frequent words

References

- Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg Corrado, and Jeffrey Dean, "Distributed representations of words and phrases and their compositionality", NIPS 2013.

- Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean, "Efficient Estimation of Word Representations in Vector Space", ICLR (Workshop Poster) 2013.